
If you are having boot or display issues on your computer, you are likely to come across recommendations to clear the CMOS. But what does CMOS do, and how is it related to BIOS? Here’s everything you need to know.

BIOS Configuration Storage

The term CMOS, pronounced “sea-moss,” is commonly used to describe a small memory on a computer motherboard that stores BIOS or UEFI settings, including the system date, time, and hardware configuration. CMOS is short for Complementary Metal-Oxide-Semiconductor, a fabrication process used to build many kinds of integrated circuit (IC) chips, including the memory chip that stores the BIOS settings. It is also referred to as CMOS RAM, BIOS RAM, or non-volatile BIOS memory. While CMOS is most commonly associated with Windows computers, Macs have a similar memory called NVRAM or PRAM.

How Are BIOS and CMOS Related?

To understand the relationship between BIOS and CMOS, it helps to know the role BIOS plays in your computer. BIOS, short for Basic Input/Output System, is firmware that comes with your computer’s motherboard. It is responsible for initializing your computer’s hardware, testing it, and booting the operating system when you press the power button. However, because the BIOS firmware is stored in read-only memory, it can’t save changes you make to its configuration, such as a new boot order or overclocking settings. These changes, along with the system date and time, are saved in CMOS. That makes CMOS important to your computer’s stable operation: if your CMOS is cleared or its battery fails, you lose all your BIOS tweaks, and the BIOS reverts to its default settings.

What Is a CMOS Battery?

The CMOS battery is usually a CR2032 lithium coin cell on your computer’s motherboard. It was traditionally used to power the CMOS because older motherboards used volatile memory to store the BIOS settings. Volatile memory loses its data when it loses power.
So a battery was used to keep the memory powered even after you shut down the computer or unplugged the power cord. Modern UEFI motherboards, however, use non-volatile memory (NVRAM) to store UEFI configuration data, and non-volatile memory retains its data even without power. That said, CMOS batteries are still used to keep the computer’s clock running. And since removing the CMOS battery, or its failure, has traditionally been associated with clearing CMOS data, many modern motherboards also reset UEFI settings when the CMOS battery fails.

Most CMOS batteries last anywhere between two and 10 years, depending on how the computer is used. If your computer shows the wrong system time or date after a reboot, or you are facing hardware compatibility issues, it may be time to replace the CMOS battery. Other key symptoms of a failed CMOS battery include problems in the boot process and constant beeping from the motherboard. You may also see errors such as “CMOS Read Error,” “CMOS Battery Failure,” and “CMOS Checksum Error.”

How to Clear CMOS

There are occasions when you may need to clear your computer’s CMOS to reset BIOS or UEFI settings. Fortunately, it’s a relatively straightforward process, and depending on whether or not you can access the BIOS or UEFI menu, there are a few ways to do it.

One of the easiest ways to clear CMOS is to open the BIOS or UEFI settings and choose the reset option, typically called “Load factory defaults” or “Load setup defaults.” You can also use the CMOS clear button if your motherboard has one; it is usually found on the rear I/O panel or near the CMOS battery. Reseating the CMOS battery is another way to clear the CMOS data, but you will need to open your computer and access the motherboard to reseat or replace the battery. Don’t worry, though: you can find detailed instructions and more ways to clear your computer’s CMOS in our detailed guide.
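The “CMOS Checksum Error” mentioned above comes from the BIOS checking its stored settings at boot. The sketch below is a simplified model and does not reproduce any vendor’s firmware. The four-byte settings layout, the `DEFAULTS` values, and the plain 16-bit additive checksum are hypothetical assumptions, loosely modeled on classic PC BIOSes, which stored a sum of their settings bytes alongside the settings. The model shows why a dead battery leaves the memory full of garbage, causes a checksum mismatch, and forces a fall back to default settings.

```python
from typing import Tuple

# Simplified, hypothetical model of battery-backed CMOS settings storage.
# Layout assumption: N settings bytes followed by a 2-byte big-endian checksum.

DEFAULTS = bytes([0x01, 0x00, 0x02, 0x10])  # hypothetical factory defaults


def checksum(settings: bytes) -> int:
    """16-bit additive checksum: sum of all settings bytes, truncated to 16 bits."""
    return sum(settings) & 0xFFFF


def save_settings(settings: bytes) -> bytes:
    """Return the bytes written to CMOS: the settings plus their checksum."""
    return settings + checksum(settings).to_bytes(2, "big")


def load_settings(stored: bytes, n_settings: int = len(DEFAULTS)) -> Tuple[bytes, bool]:
    """Return (settings, ok). On a checksum mismatch, fall back to DEFAULTS."""
    settings = stored[:n_settings]
    stored_sum = int.from_bytes(stored[n_settings:n_settings + 2], "big")
    if checksum(settings) != stored_sum:
        return DEFAULTS, False  # the case a BIOS reports as a "CMOS Checksum Error"
    return settings, True


# The user changes byte 1 (a hypothetical boot-order field) and saves.
tweaked = bytes([0x01, 0x03, 0x02, 0x10])
cmos = save_settings(tweaked)
print(load_settings(cmos))  # the tweaked settings survive, ok is True

# The battery dies: the volatile memory now holds garbage (here, all 0xFF).
dead_battery = bytes([0xFF] * len(cmos))
print(load_settings(dead_battery))  # defaults are loaded, ok is False
```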


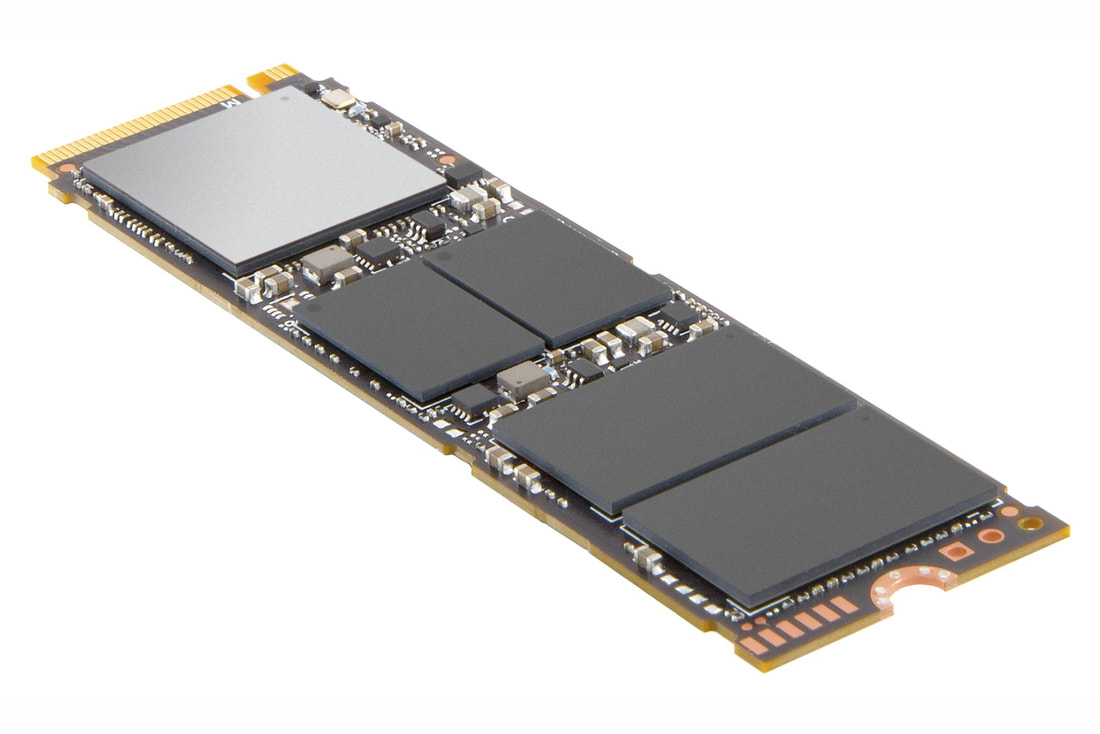

When shopping for a solid-state drive (SSD), you are bound to come across a term called TBW. It represents the endurance of an SSD. Here’s why it matters and how to interpret it.

SSD Endurance Metric

TBW, or “Terabytes Written,” is a metric that tells you how much data you can cumulatively write to an SSD over its lifetime. As the name suggests, it is given in terabytes. For example, if an SSD is rated at 350 TBW, you can write a total of 350TB to it before you may have to replace it. Overall, TBW gives you an idea of a solid-state drive’s endurance. Notably, TBW is sometimes also expanded as Total Bytes Written, since several enterprise-grade SSDs now state their rating in petabytes.

Why Does TBW Matter?

The TBW number is important because SSDs have a finite life. SSDs store data in flash memory cells. Reading data from these cells does not wear them out, but the cells degrade a little each time they are erased and rewritten. Eventually, a flash memory cell degrades so much that it fails. TBW therefore tells you how much data you can write before the memory cells may become unreliable.

TBW ratings are higher for larger-capacity drives because they have more flash memory cells to spread writes across. For example, a typical 500GB SSD has a TBW of around 300, whereas 1TB SSDs usually have around 600 TBW. Enterprise-grade SSDs also have higher TBW ratings than consumer-grade SSDs, although some premium consumer-grade SSDs come with far higher TBW than typical SSDs.

Should You Care About TBW?

Although TBW is a reliable indicator of an SSD’s endurance, most regular computer users will never reach it during the normal lifetime of a drive. So unless you write hundreds of gigabytes of data each day, you don’t have to worry about TBW. TBW also matters mostly for internal SSDs. External SSDs are primarily used for data backup, so they are written to less often.
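To see why most users never reach their drive’s TBW, you can convert the rating into years of use at a given daily write volume. This is a rough sketch, not a manufacturer formula. It assumes decimal units (1TB = 1,000GB) and a constant daily write volume. The 300 TBW and 50GB-per-day figures are purely illustrative. The second function estimates the share of rated endurance still left, given the total written so far.

```python
def years_to_reach_tbw(tbw_tb: float, gb_written_per_day: float) -> float:
    """Years until cumulative writes hit the TBW rating at a constant daily rate."""
    tb_per_day = gb_written_per_day / 1000  # decimal units: 1 TB = 1,000 GB
    return tbw_tb / tb_per_day / 365


def remaining_endurance(tb_written: float, tbw_tb: float) -> float:
    """Fraction of the rated TBW not yet used (0.0 once the rating is exceeded)."""
    return max(0.0, 1.0 - tb_written / tbw_tb)


# Illustrative: a drive rated at 300 TBW, written at 50GB per day.
print(f"{years_to_reach_tbw(300, 50):.1f} years")  # about 16.4 years
print(f"{remaining_endurance(100, 300):.0%} of rated endurance left")
```

Even at a fairly heavy 50GB per day, the illustrative drive would take well over a decade to reach its rating.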
That said, higher endurance is a plus if you are shopping for a new SSD. SSDs with a higher TBW rating typically cost more than SSDs with a lower rating. It’s a good idea to take a balanced approach, because an endurance rating that is too low may cause trouble in the long run.

What Is DWPD?

DWPD, or Drive Writes Per Day, is another term used to describe SSD endurance. As its name suggests, it tells you how many times a day you can overwrite the entire capacity of an SSD over a specific warranty period. For example, if your 1TB SSD is rated at 1 DWPD, it can handle 1TB of data written to it every day over its warranty period. If its DWPD is 10, it can withstand 10TB of data written to it daily.

How to Check the TBW of an SSD

If you are curious about the remaining lifespan of an SSD, look up the total amount of data written to it and compare that figure with the SSD’s TBW rating. The official software shipped with the SSD typically shows this information under its SMART (Self-Monitoring, Analysis, and Reporting Technology) data. Look for a field such as “Data Units Written” or “Total Host Writes.” Depending on how much the SSD has been used, this number may be in gigabytes or terabytes. Convert it to terabytes for an easy comparison with the TBW. For example, if your SSD’s “Data Units Written” total is 101TB and its TBW is 300, the SSD has about two-thirds of its rated life left.

Apart from the official SSD software, you can also use CrystalDiskInfo on Windows or DriveDX on Mac to find the total data written to your SSD. CrystalDiskInfo is free to download, while DriveDX is paid software with a 15-day free trial.

What Happens After an SSD Crosses Its TBW?

Once an SSD crosses its TBW, it isn’t completely useless or dead. You can still read the stored information, but you may face issues writing more data.
However, SSDs typically outperform their TBW ratings, because manufacturers are fairly conservative when assigning them. Eventually, the SSD’s SMART function may determine that the drive has no writable blocks left or is nearing failure. At that point, the drive locks itself into read-only mode. In this mode, you can no longer write data, but you can still read the stored information and transfer it to another SSD or HDD.

The Windows 10 version 21H2 update was released in November 2021, and Microsoft has confirmed that it is now widely available. This means most users will see it when they check for updates manually. If you don’t see the update, you can download Windows 10 (version 21H2) ISOs to update devices immediately or to perform a clean install. To download the ISO file for the Windows 10 November 2021 Update (version 21H2) from Microsoft’s website, click the direct link below.

NVMe (Non-Volatile Memory Express) and SATA (Serial ATA) are interfaces between your SSD and the rest of your computer. SATA arrived in 2003 and was instrumental in helping the HDDs of that era increase their transfer speeds. SSDs later adopted SATA to communicate with the rest of the system, so there are both SATA HDDs and SATA SSDs. NVMe, on the other hand, is a newer interface created specifically for SSDs. NVMe SSDs use the bandwidth of Peripheral Component Interconnect Express (PCIe). PCIe is a general-purpose interface standard found on motherboards for connecting high-speed components like graphics cards and SSDs.
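Part of NVMe’s speed advantage comes from raw link bandwidth. The sketch below computes theoretical usable bandwidth from a link’s transfer rate and its line encoding. The figures are standard interface numbers rather than anything stated in this article. SATA III runs at 6 Gb/s with 8b/10b encoding. PCIe 3.0 and 4.0 run at 8 and 16 GT/s per lane with 128b/130b encoding. The x4 lane count is an assumption, chosen because it is common for M.2 NVMe drives. Real drives deliver less because of protocol overhead.

```python
def usable_mb_per_s(gigatransfers: float, payload_bits: int, total_bits: int, lanes: int = 1) -> float:
    """Theoretical usable bandwidth in decimal MB/s for a serial link.

    Each transfer moves one bit per lane; the line encoding spends
    (total_bits - payload_bits) of every total_bits on overhead.
    """
    bits_per_s = gigatransfers * 1e9 * lanes * payload_bits / total_bits
    return bits_per_s / 8 / 1e6  # bits -> bytes -> megabytes


links = {
    "SATA III (6 Gb/s, 8b/10b)": usable_mb_per_s(6, 8, 10),
    "PCIe 3.0 x4 (8 GT/s, 128b/130b)": usable_mb_per_s(8, 128, 130, lanes=4),
    "PCIe 4.0 x4 (16 GT/s, 128b/130b)": usable_mb_per_s(16, 128, 130, lanes=4),
}
for name, mbps in links.items():
    print(f"{name}: ~{mbps:,.0f} MB/s")
```

On these numbers, a PCIe 3.0 x4 link (about 3,938 MB/s) has roughly six and a half times the headroom of SATA III (600 MB/s). This is why a SATA SSD cannot go much beyond 600 MB/s however fast its flash memory is.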

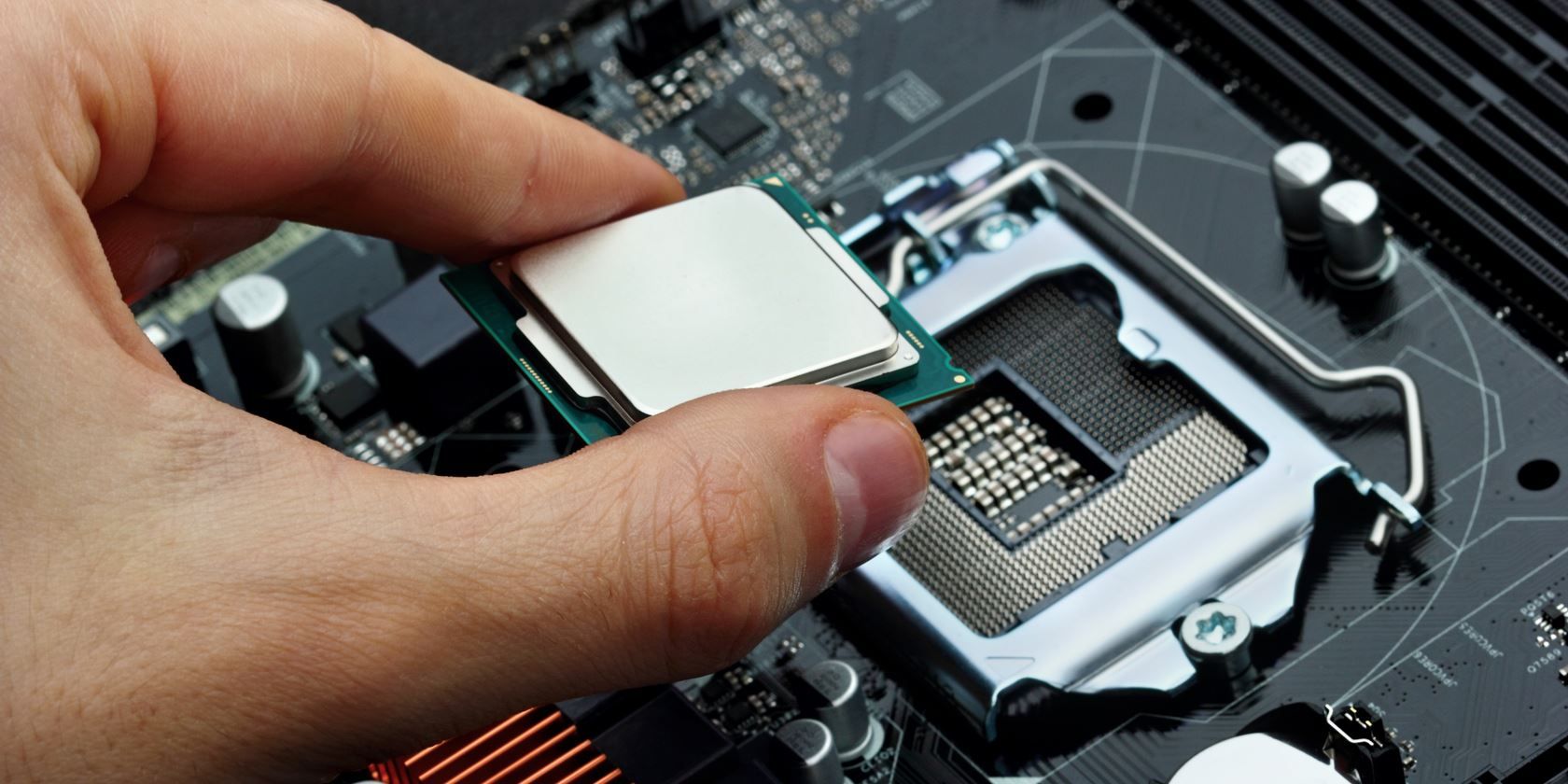

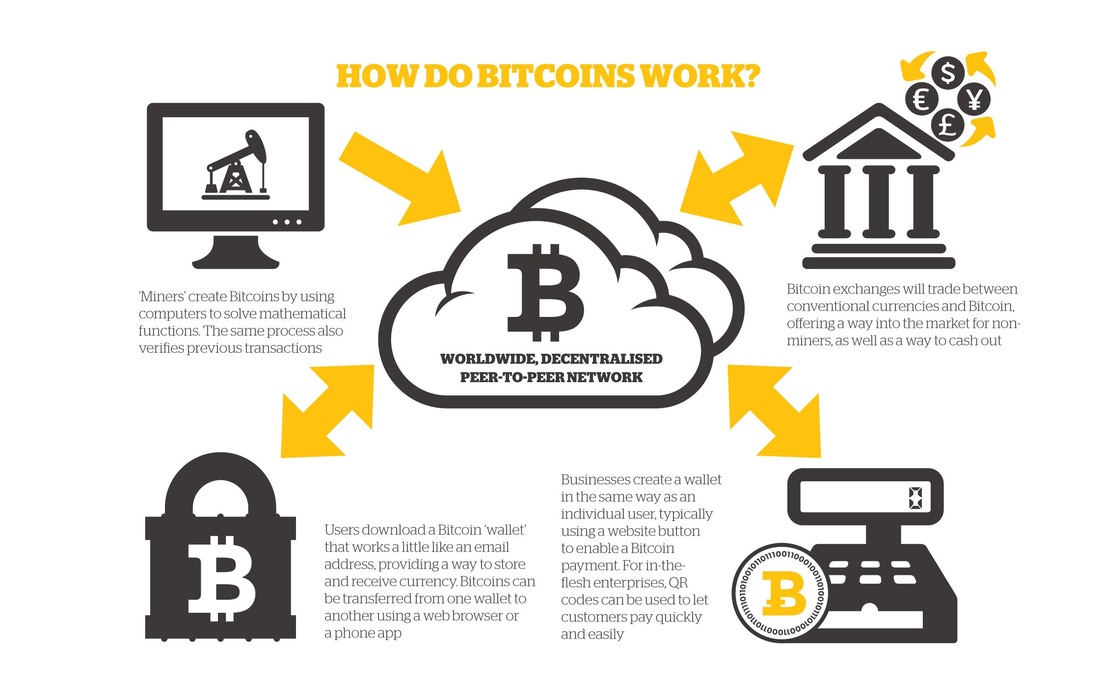

Under PCIe, SSDs can use one of two standards: AHCI (Advanced Host Controller Interface) or NVMe. AHCI is older and was designed for HDDs, but it was later used in SSDs as well. NVMe is the newer and better standard, made specifically for SSDs. NVMe delivers faster performance, which is one of the key reasons NVMe SSDs cost more than their SATA counterparts for the same amount of storage. However, although NVMe SSDs are faster, there are reasons why you might stick with SATA SSDs instead of jumping straight to NVMe.

Depending on the interface used, you’ll see SSDs labeled as SATA or PCIe, and there are different factors to consider when choosing between them. As mentioned previously, PCIe SSDs can use the older AHCI driver or the newer NVMe driver. If speed is all you care about, pick an NVMe SSD over a PCIe SSD with the AHCI driver. Also, keep in mind that maximum transfer speeds vary with the PCIe generation.

M.2 Is an SSD Form Factor

Aside from NVMe and SATA, M.2 is another common term in the SSD space. So what is an M.2 SSD? Simply put, it is an SSD in the M.2 form factor. M.2 was previously called the Next Generation Form Factor (NGFF). Most consumer NVMe SSDs use the M.2 form factor. SATA SSDs are available in both the standard 2.5-inch form factor and the smaller, slimmer M.2 form factor. Most SATA SSDs come in the 2.5-inch form factor, but you’ll find M.2 SSDs in ultrathin laptops, tablets, and mini PCs.

M.2 was developed by the SATA International Organization and a consortium of industry players. It is often described as a replacement for mini Serial Advanced Technology Attachment (mSATA) SSDs, although you can still buy mSATA SSDs off the shelf. In short, there are different types of M.2 SSDs, including SATA SSDs, PCIe NVMe SSDs, and PCIe AHCI SSDs.
So remember that M.2 only tells you the form factor. It says little about the interface used, which is equally important, if not more so.

Understand NVMe, SATA, and M.2 SSDs

You’ll come across many insider terms when shopping for an SSD, but don’t let the jargon confuse you. As detailed above, the main difference between NVMe and SATA SSDs is the interface: NVMe SSDs use a PCIe interface, while SATA SSDs use a SATA interface. M.2, on the other hand, is an SSD form factor. It is often used to fit high-performance storage into high-end gaming rigs, ultraportable laptops, and tablets. You can get both SATA and PCIe SSDs in the M.2 form factor. More often than not, these terms are combined, so you’ll hear people talk about their new M.2 NVMe SSD or M.2 SATA SSD.

What Is a Computer?

A computer is an electronic device that accepts data as input, processes that data into information, stores the information for future use, and outputs it whenever it is needed.

A Brief History

By a broad definition, computers have been around for thousands of years. One of the earliest was the abacus, a series of beads arranged on metal rods. The beads could be slid back and forth to operate on numbers. This was a very simple device and is not commonly thought of as a computer today.

Our idea of computers involves electricity and electronics, and electricity makes computers far more efficient. The first electronic computers consumed an enormous amount of electricity and used vacuum tubes as switches to carry out their operations. These computers were given instructions using punch cards and were huge, taking up entire floors of buildings. Only the more privileged universities and government facilities had access to them. In the 1960s, vacuum tubes were replaced by transistors and integrated circuits, which greatly reduced the size and power consumption of computers.
They were still very large by today’s standards, but more institutions had access to computing power than ever before. Around the start of the 1970s, the microprocessor was invented, which shrank computers even further. By the end of the 1970s, computers were widespread in businesses. Using a computer involved typing on a terminal (a keyboard and monitor connected to a large central computer). Soon, parts became small enough that many people could have a computer at home, and the Personal Computer, or PC, was born. Since then, personal computers have become tremendously more capable. They are much smaller, yet much faster, and they have become affordable for many families worldwide.

Hardware

Hardware is the stuff you can touch, as opposed to software, which is abstract and exists only as computer code. Hardware is made of physical materials and is subject to the laws of physics. Software, by contrast, is bound only by its creator’s imagination and the user’s willingness to use it.

The Insides

Inside the computer case are various components that allow the computer to run.

CPU

The central processing unit (CPU), also called a processor, is located inside the computer case on the motherboard. It is sometimes called the brain of the computer, and its job is to carry out commands. Whenever you press a key, click the mouse, or start an application, you’re sending instructions to the CPU. The CPU is usually a two-inch ceramic square with a silicon chip inside, and the chip is usually about the size of a thumbnail. The CPU fits into the motherboard’s CPU socket and is covered by a heat sink, an object that absorbs heat from the CPU. A processor’s clock speed is measured in megahertz (MHz), or millions of cycles per second, and gigahertz (GHz), or billions of cycles per second.
A faster processor can execute instructions more quickly. However, the actual speed of the computer depends on many different components, not just the processor.

Memory

RAM (Random Access Memory), commonly just called memory, holds the code and data the computer is actively working on, so the CPU can access them quickly. The amount of RAM is limited, so it is constantly cleared and refilled (don’t worry; all computers do this automatically). RAM is just one of the parts that determine your computer’s speed.

RAM is called “volatile” memory because the information stored in it disappears when the power is turned off. Hard drives and flash drives, on the other hand, contain non-volatile memory, which is like paper: it can be destroyed or erased, but when properly taken care of, it can last for many years.

RAM is plugged into special slots on the motherboard, and a high-speed link (known as a bus) connects the memory to the CPU. Each motherboard has a fixed number of RAM slots, often 2 or 4. Only certain types and sizes of RAM work with a given motherboard, so check your motherboard’s details before buying.

Motherboard

The motherboard is the computer’s main circuit board. It’s a thin board that holds the CPU, memory, connectors for the hard drive and optical drives, expansion cards that control video and audio, and connections to your computer’s ports (such as USB ports). The motherboard connects directly or indirectly to every part of the computer.

Power Supply Unit

The power supply unit converts power from the wall outlet into the type of power the computer needs. It sends power through cables to the motherboard and other components.
Hard Drive

★ Bit - The smallest unit of data, a single binary digit (0 or 1); all computers work on a binary numbering system
★ Byte - A byte consists of eight bits
★ Kilobyte - A kilobyte (KB) consists of 1024 bytes
★ Megabyte - A megabyte (MB) consists of 1024 kilobytes
★ Gigabyte - A gigabyte (GB) consists of 1024 megabytes
★ Terabyte - A terabyte (TB) consists of 1024 gigabytes

The hard drive is the main storage area in the computer. It is usually where you put data to be stored permanently (until you choose to erase it), and it keeps data after the power is turned off. The official name for a hard drive is Hard Disk Drive (HDD), but it is almost always called a hard drive. Virtually all of your data is stored on your hard drive.

A hard drive is made up of one or more spinning disks (platters), and data is recorded magnetically onto their surfaces. In this respect it resembles records, CDs, and DVDs, which also store data on a spinning disc. The capacity of a hard drive (today measured in gigabytes or terabytes) is determined by how dense (small) the recording is. Many of today’s major programs (such as games and media creation and editing programs like Photoshop) and files (such as pictures, music, or video) use a considerable amount of space. As of 2011, most low-end computers shipped with a 160GB (gigabyte) or larger hard drive. In 2019, it is common to find desktop computers and laptops with a hard drive of 1000GB (1 terabyte) or more. As an example, an average .mp3 file takes between 7.5 and 15MB (megabytes) of space. A megabyte is 1/1024th of a gigabyte, so most new computers can store thousands of such files.

SSD

The SSD, or Solid-State Drive, is a storage device that uses flash memory chips instead of a spinning disk. It does the same job as a Hard Disk Drive, but it is much faster than a traditional HDD because it has no moving parts and doesn’t have to spin up. Because of this, an SSD can reach transfer speeds up to 10x those of an HDD. SSDs are also quieter than HDDs, but they cost more per gigabyte.

USB technology can be found in one or more of the devices most people use on a daily basis.
However, USB cables come with a variety of connectors, most of which are incompatible with one another. This makes replacing a USB cable a troublesome task, especially when the differences between them may seem trivial to the inexperienced. For instance, although Micro-B and Mini-B USB connectors have similar names, you cannot plug one into the other’s port. To make matters even more confusing, USB technology keeps evolving. Even the same plug type can differ between USB versions, which also affects the plug’s performance. Below is a reference guide to the different types of USB cables on the market.

USB Type A

Also known as the USB standard A connector, the USB A connector is primarily used on host controllers in computers and hubs. The USB-A socket is designed to provide a downstream connection for host controllers and hubs, and it is rarely implemented as an upstream connector on a peripheral device. This is because a USB host supplies 5V DC power on the VBUS pin. Though not very common, USB A male to A male cables are used by some implementers to connect two USB A female ports. Be aware that typical A-to-A cables are not intended for connecting two host computers, or a computer to a hub.

USB Type B

Also known as the USB standard B connector, the B-style connector is designed for USB peripherals such as printers, the upstream port on hubs, and other larger peripheral devices. USB B connectors were developed mainly so that peripheral devices could be connected without the risk of connecting two host computers to one another. The USB B connector is still used today, though it is slowly being phased out in favor of more refined USB connector types.

USB Type C

The USB-C, or USB Type-C, connector is the newest USB interface. It came to market alongside the USB 3.1 standard.
Unlike the USB Type A and Type B connectors described above, the USB Type-C connector can be used both on host controller ports and on devices that use upstream sockets. In the last few years, a number of laptops and cellphones with USB-C connectors have appeared on the market. The USB Type-C connector is compatible with USB 2.0, 3.0, and 3.1 Gen 1 and Gen 2 signals. A full-featured USB 3.1 Gen 2 C-to-C cable can transmit data at up to 10 Gbps and deliver up to 20V at 5A (100W) of power. It also supports DisplayPort and HDMI alternate modes for carrying video and audio signals.

USB Mini B

Similar to the USB B connector, USB Mini-B sockets are used on USB peripheral devices, but in a smaller form factor. The Mini-B plug has 5 pins, including an extra ID pin to support USB On-The-Go (OTG), which allows mobile devices and other peripherals to act as a USB host. This plug was initially used on early smartphones. As smartphones became more compact and slimmer, the Mini USB plug was replaced by Micro USB. Today, Mini-B is still found on some digital cameras. The rest of the mini plug series has become legacy, since those connectors are no longer certified for new products.

USB Micro B

The Micro USB B connector is essentially a scaled-down form of the Mini USB. It allowed mobile devices to get slimmer while keeping the ability to connect to computers and hubs. The Micro-B connector has 5 pins to support USB OTG, which lets smartphones and similar mobile devices read external drives, digital cameras, or other peripherals as a computer would. Note that enabling the OTG feature requires special wiring in the cable assembly. On Oct. 22, 2009, the International Telecommunication Union (ITU) announced that it was including the Micro-USB interface in its Universal Charging Solution (UCS), which industry has broadly adopted.
USB 3.0 Type A

The USB 3.0 A connector inherits the design of the Type-A connector used in USB 2.0 and USB 1.1 applications. It also provides a "downstream" connection designed for use only on host controllers and hubs. However, the USB 3.0 Type A connector has additional pins that the USB 2.0 Type A lacks. The USB 3.0 connector is designed to support 5Gbps "SuperSpeed" data transfer, and it remains backward compatible with USB 2.0 ports at lower data rates. USB 3.0 connectors are often blue or carry an "SS" logo to help distinguish them from previous generations.

USB 3.0 Type B

The USB 3.0 B-Type connector is designed for USB peripherals such as printers, the upstream port on hubs, and other larger peripheral devices. This connector supports USB 3.0 SuperSpeed applications and can also carry USB 2.0 data at the same time. A USB 3.0 B plug cannot be plugged into a USB 2.0 B socket because of its different shape. However, devices with USB 3.0 Type B receptacles can accept USB 2.0 Type B male plugs.

USB 3.0 Micro B

This connector is also referred to as the SuperSpeed Micro USB B connector. It adds five more pins beside the USB 2.0 Micro B connector to achieve full USB 3.0 data transfer speed. USB 3.0 Micro B connectors are found on hard drives, digital cameras, cell phones, and other USB 3.0 devices. A USB 3.0 Micro B male connector cannot be plugged into a USB 2.0 Micro B socket because of its different shape. However, devices with a USB 3.0 Micro B receptacle can accept a USB 2.0 Micro B male plug. With the growing need for higher data transfer rates, industrial applications such as machine vision and 3D imaging are increasingly using USB 3.0 Micro B in their system designs. Screw-lock Micro B connectors are often used in such cabling to ensure a secure connection.
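To put the connector speeds in this guide in perspective, the sketch below computes a best-case transfer time for a single file. It uses nominal signaling rates only: the 5 Gbps and 10 Gbps figures above, plus 480 Mbps for USB 2.0 Hi-Speed, which this guide does not list. It ignores line encoding (8b/10b on USB 3.0, 128b/132b on USB 3.1 Gen 2) and protocol overhead, so real transfers are noticeably slower. The 4GB file size is a hypothetical example.

```python
# Nominal signaling rates in bits per second; real throughput is lower.
USB_RATES_BPS = {
    "USB 2.0 Hi-Speed": 480e6,
    "USB 3.0 (3.1 Gen 1) SuperSpeed": 5e9,
    "USB 3.1 Gen 2": 10e9,
}


def best_case_seconds(file_bytes: int, rate_bps: float) -> float:
    """Lower bound on transfer time: payload bits divided by the nominal bit rate."""
    return file_bytes * 8 / rate_bps


file_size = 4 * 10**9  # hypothetical 4 GB video file (decimal gigabytes)
for name, rate in USB_RATES_BPS.items():
    print(f"{name}: at least {best_case_seconds(file_size, rate):.1f} s")
```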
USB 3.0 Internal Connector (20 Pin)

Developed by Intel, USB 3.0 internal connector cables are usually used to connect the front-panel USB SuperSpeed ports to the motherboard. The 20-pin internal header contains two USB 3.0 signal channels. This allows up to two USB 3.0 ports without them sharing one channel’s bandwidth.

USB 3.1 Internal Connector

Developed by Intel, USB 3.1 internal connector cables are designed to connect the motherboard to front-panel USB ports. Like the earlier USB 3.0 internal connector, the new-generation connector has a 20-pin header version, which supports a single Type-C port or dual Type-A ports. It has a smaller form factor and a stronger mechanical latch. A 40-pin header version was also introduced to support two full-featured Type-C ports.

Bitcoin is the currency of the Internet: a distributed, worldwide, decentralized digital money. Unlike traditional currencies such as dollars, pounds, or euros, bitcoins are issued and managed without any central authority whatsoever. There is no government, company, or bank in charge of Bitcoin. Even though Bitcoin has been around for almost a decade, the idea of Bitcoin is still very confusing to the mainstream. I will attempt to break it down so it is easier to understand, but first, a brief history of how Bitcoin was founded.

Bitcoin is a digital asset and payment system invented by Satoshi Nakamoto. Nakamoto introduced the idea on 31 October 2008 on a cryptography mailing list and released it as open-source software in 2009. The system is peer-to-peer, and transactions take place between users directly, without an intermediary. These transactions are verified by network nodes and recorded in a public distributed ledger called the blockchain, which uses bitcoin as its unit of account. Since the system works without a central repository or single administrator, the U.S.
Treasury categorizes bitcoin as a decentralized virtual currency. Bitcoin is often called the first cryptocurrency, although earlier systems existed, and it is more accurately described as the first decentralized digital currency. Bitcoin is the largest of its kind in terms of total market value.

Bitcoins are created as a reward for payment-processing work, in which users offer their computing power to verify and record payments in a public ledger. This activity is called mining, and miners are rewarded with transaction fees and newly created bitcoins. Besides being obtained by mining, bitcoins can be exchanged for other currencies, products, and services. When sending bitcoins, users can pay an optional transaction fee to the miners.

In February 2015, the number of merchants accepting bitcoin for products and services passed 100,000. Credit card processors typically charge 2–3%, while merchants accepting bitcoins often pay fees ranging from 0% to less than 2%. The number of merchants accepting bitcoin grew fourfold in 2014, yet the cryptocurrency did not gain much momentum in retail transactions. The European Banking Authority and other sources have warned that bitcoin users are not protected by refund rights or chargebacks.

The use of bitcoin by criminals has attracted the attention of financial regulators, legislative bodies, law enforcement, and the media. Criminal activity is centered mainly on dark-net markets and theft. Even so, officials in countries such as the United States also recognize that bitcoin can provide legitimate financial services. Digital theft, or hacking, of bitcoins has also been an issue for bitcoin users. In 2016, the major Bitcoin exchange Bitfinex was hacked, and nearly 120,000 BTC (around US$60M) was stolen. Bitcoin’s value crashed, and Bitfinex was forced to suspend trading.

Below is a breakdown of how Bitcoin works. Utilizing a Bitcoin peer-to-peer network, the following is noted.
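The mining described above is, at its core, a proof-of-work puzzle. Miners repeatedly hash candidate block data with a changing number (a nonce) until the result meets a difficulty target, and anyone can check the answer with a single hash. The toy sketch below illustrates only that idea and is not Bitcoin’s implementation. Real Bitcoin applies SHA-256 twice to an 80-byte block header and compares the result with a numeric target. Here the block data is a plain string, and “difficulty” is simply the number of leading zero hex digits required.

```python
import hashlib


def block_hash(block_data: str, nonce: int) -> str:
    """SHA-256 of the block data combined with a nonce, as a hex string."""
    return hashlib.sha256(f"{block_data}{nonce}".encode()).hexdigest()


def mine(block_data: str, difficulty: int, max_nonce: int = 10_000_000):
    """Search for a nonce whose hash starts with `difficulty` zero hex digits.

    Returns (nonce, hash), or None if no nonce below max_nonce works.
    """
    target_prefix = "0" * difficulty
    for nonce in range(max_nonce):
        digest = block_hash(block_data, nonce)
        if digest.startswith(target_prefix):
            return nonce, digest
    return None


def verify(block_data: str, nonce: int, difficulty: int) -> bool:
    """Checking a claimed solution costs one hash, however long mining took."""
    return block_hash(block_data, nonce).startswith("0" * difficulty)


result = mine("alice pays bob 1 BTC", difficulty=4)
if result is not None:
    nonce, digest = result
    print(nonce, digest, verify("alice pays bob 1 BTC", nonce, 4))
```

Each extra zero digit multiplies the expected number of attempts by 16. This asymmetry lets the network make mining expensive while keeping verification cheap.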

A proxy server is a computer that offers a network service allowing clients to make indirect network connections to other network services. A client connects to the proxy server and then requests a connection, file, or other resource available on a different server. The proxy provides the resource either by connecting to the specified server or by serving it from a cache. In some cases, the proxy may alter the client’s request or the server’s response for various purposes.

Web Proxies

A common proxy application is a caching Web proxy. It provides a nearby cache of Web pages and files available on remote Web servers, allowing local network clients to access them more quickly or reliably. When it receives a request for a Web resource (specified by a URL), a caching proxy looks for that URL in its local cache. If found, it returns the document immediately. Otherwise, it fetches the document from the remote server, returns it to the requester, and saves a copy in the cache. The cache usually uses an expiry algorithm to remove documents based on their age, size, and access history. Two simple cache algorithms are Least Recently Used (LRU) and Least Frequently Used (LFU). LRU removes the least recently used documents, and LFU removes the least frequently used documents.

Web proxies can also filter the content of the Web pages they serve. Some censorware applications, which attempt to block offensive Web content, are implemented as Web proxies. Other Web proxies reformat Web pages for a specific purpose or audience; for example, Skweezer reformats Web pages for cell phones and PDAs. Network operators can also deploy proxies to intercept computer viruses and other hostile content served from remote Web pages.

A special case of Web proxies is “CGI proxies.” These are websites that allow a user to access other sites through them. They generally use PHP or CGI to implement the proxying functionality.
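The caching Web proxy described earlier works in two steps: look up the URL in the cache, then fetch and store the document on a miss. That flow, together with LRU eviction, fits in a few lines. This is a minimal in-memory sketch. `LRUCache`, `handle_request`, and the `fetch_remote` callable are hypothetical names. The capacity counts documents rather than bytes, and the sketch ignores the age and size factors that real proxy caches also weigh. An LFU policy would instead track a hit count per URL and evict the entry with the lowest count.

```python
from collections import OrderedDict
from typing import Callable, List, Optional


class LRUCache:
    """Holds up to `capacity` documents, evicting the least recently used one."""

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        self._docs: "OrderedDict[str, bytes]" = OrderedDict()  # oldest first

    def get(self, url: str) -> Optional[bytes]:
        if url not in self._docs:
            return None
        self._docs.move_to_end(url)  # mark as most recently used
        return self._docs[url]

    def put(self, url: str, document: bytes) -> None:
        self._docs[url] = document
        self._docs.move_to_end(url)
        if len(self._docs) > self.capacity:
            self._docs.popitem(last=False)  # drop the least recently used entry


def handle_request(url: str, cache: LRUCache, fetch_remote: Callable[[str], bytes]) -> bytes:
    """Serve from the cache if possible; otherwise fetch, store a copy, and return it."""
    cached = cache.get(url)
    if cached is not None:
        return cached
    document = fetch_remote(url)
    cache.put(url, document)
    return document


# Usage with a stand-in for the remote server that records every real fetch.
fetches: List[str] = []


def fake_fetch(url: str) -> bytes:
    fetches.append(url)
    return f"<html>{url}</html>".encode()


cache = LRUCache(capacity=2)
for url in ["/a", "/b", "/a", "/c", "/b"]:
    handle_request(url, cache, fake_fetch)
print(fetches)  # ['/a', '/b', '/c', '/b']: "/b" was evicted when "/c" arrived
```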
CGI proxies are frequently used to reach websites blocked by corporate or school proxies. They also hide the user’s own IP address from the websites accessed through them, so they are sometimes used to gain a degree of anonymity.

You may see references to four different types of proxy servers:

Transparent Proxy: This type of proxy server identifies itself as a proxy server and also makes the original IP address available through the HTTP headers. These proxies are generally used for their ability to cache websites and do not provide their users any real anonymity. However, a transparent proxy will get you around simple IP bans. The name refers to the fact that your IP address is exposed. It does not mean that you are unaware of using the proxy, or that your system is not specifically configured to use it.

Anonymous Proxy: This type of proxy server identifies itself as a proxy server but does not make the original IP address available. It is detectable, but it provides reasonable anonymity for most users.

Distorting Proxy: This type of proxy server identifies itself as a proxy server but makes an incorrect original IP address available through the HTTP headers.

High Anonymity Proxy: This type of proxy server does not identify itself as a proxy server and does not make the original IP address available.

The average computer user should be aware of the potential threats a computer faces each time it connects to the World Wide Web. Knowing about them lets you pass the information on to others and protect yourself accordingly. This article covers the differences between some of the most common tech terms that everyone has heard at some point. Why are they all so different, and what do they all mean? In the old days we called everything a virus, but nowadays we have more precise names to categorize them.
Below I will explain, as simply as possible, the difference between a virus, spyware, malware and adware.

What Is Malware? Malware is a software program with bad intentions. It can be installed by the computer user accidentally, or it can sneak into your computer through various avenues. It's not the same as a piece of software that happens to harm your computer by chance: malware is software that has been developed with the intent of causing problems.

What Is Spyware? Spyware is a type of malware that invades your computer and basically spies on you. Different types of spyware collect different information. A common type is the keylogger, which records the keystrokes typed on your keyboard; this is how people lose their bank account details. Other spyware records your actions and browsing habits on the internet. Any information collected by spyware is usually gathered with the intent to sell it.

What Is Adware? Adware is another form of malware and is exactly what the name suggests: software with advertising. Adware can be downloaded on its own and is sometimes bundled with free programs; for example, Windows Live Messenger and Yahoo Messenger included adware. Although some programs give you the option not to install the extra adware, others sneak it in without permission.

What Is A Virus? A virus is a small program designed to infect your computer and cause errors, computer crashes, and even damage to your computer hardware. Unlike spyware, a virus can replicate itself. It can also travel from one computer to another via an internet connection. You can also get viruses from discs with virus-infested files stored on them; however, the internet is the most common entry point. Some common symptoms of a virus are emails being sent to all your contacts when you didn’t send them, being taken to web pages that you didn’t choose, or being told you have a virus and must download a program to fix it.
Solutions: The most effective way to shield against any type of intrusion is to have your operating system's firewall enabled, a router with a built-in firewall governing your network, and an anti-malware and anti-virus program installed, updated and constantly running. These steps must be followed without question. The rest is academic. The more factual information you learn, the better your online experience will be. Whether you are joining a simple LAN with friends, playing online multiplayer, surfing social networks or running your own server, in the long run it's essential to learn everything you can about computer security.

When I got my first job in IT, wiring network cables was one of the first things I had to learn, and remembering which color was supposed to go where was always hard. Here is a much simpler diagram and tutorial to help you remember.

Straight-through cables are used to connect a computer to a hub or switch. If your connection is PC to PC or hub to hub, you must use crossover wiring instead. The following diagrams show the color coding for CAT5e cables based on the two standards supported by TIA/EIA. 568B wiring is by far the most common wiring method. There is no difference in connectivity between 568B and 568A cables, so you can choose whichever method suits you best, provided that both ends of the cable use the same standard.

Crossover cables are used to connect PCs to one another or to connect a hub to another hub. However, most hubs these days have an uplink port, which allows you to connect two hubs together through this port using a straight-through cable; check your hub's manual to see if it has one. The following diagram shows the wiring at both ends of the connectors, with all four pairs crossed.

Below is a diagram of the RJ45 connector and its pin numbers, so you can see which pins the diagrams above refer to.

★ I am often asked what RAID means, how to set it up and how it benefits the user.
Here is my explanation of what it all means, from complex to simple.

What Is RAID? RAID, known as a "Redundant Array of Inexpensive Disks", is a storage technology that combines multiple disk drives into one logical unit. Data is distributed across the drives in one of several ways called "RAID levels", depending on what level of redundancy and performance (via parallel communication) is required. The most common forms of RAID array are RAID 0, 1 and 5; RAID 6 is used more rarely.

RAID 0 - Disk Striping (the process of dividing a body of data into blocks and spreading the data blocks across several partitions on several hard disks).
Pros:
★ Uses anywhere from 2 to 32 HDDs.
★ Multiple drives work as one disk, maximizing disk space, e.g. (4 x 250GB) = 1000GB/1TB usable.
★ Better read/write speed = faster performance.
Cons:
★ If one hard disk fails, the whole array fails.
★ Keep backups.
★ Each drive stores only pieces of the data, not complete copies of it.
★ No redundancy drive or safety net.

RAID 1 - Disk Mirroring (data is written to two duplicate disks simultaneously, so if one of the disk drives fails, the system can instantly switch to the other disk without any loss of data or service).
Pros:
★ Data is copied from one hard disk to the other.
★ If one drive fails, the data is still usable because there is a copy.
★ Faster read performance.
★ The failed hard disk can be replaced and the mirror rebuilt.
Cons:
★ Data storage space does not increase, e.g. (2 x 250GB) = 250GB usable plus 1 mirrored copy.

RAID 5 - Disk Striping with Parity
Pros:
★ Needs at least 3 hard disks, up to a maximum of 32.
★ Data is striped across the drives together with parity information, which is spread across all the drives and can recreate the contents of any one drive.
★ If one drive fails, the others hold enough information to keep the data usable.
★ Replacing the failed drive rebuilds the array.
★ Faster performance.
★ Increases space partially.
★ Hot swappable (if the controller supports it).
Cons:
★ One drive's worth of space is used for parity, so usable space is (number of drives - 1) x drive size, e.g. (4 x 250GB) = 750GB.

RAID 6 - Disk Striping with Double Parity
Pros:
★ Works like RAID 5 but adds a second set of parity, so the array can survive two drive failures.
Cons:
★ Gets more expensive, since the extra parity needs another drive's worth of space.

Creating a RAID Array:
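Before looking at hardware and software RAID, it may help to see what an array actually does with your data. The toy Python sketch below is an illustration only, not a real RAID driver: it takes the striping-with-parity idea behind RAID 5, splits bytes across three or more simulated in-memory "disks", stores an XOR parity block in every stripe (rotating which disk holds it), and rebuilds a failed disk from the survivors. The 4-byte block size, the parity rotation order and all function names are assumptions chosen for the demo; real controllers use much larger blocks and their own layouts.

```python
from functools import reduce

BLOCK_SIZE = 4  # bytes per block; deliberately tiny for the demo


def xor_blocks(blocks) -> bytes:
    """XOR equal-length blocks together, byte by byte."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


def parity_disk_for(stripe_no: int, num_disks: int) -> int:
    """Rotate the parity block across the disks (one simple scheme among several)."""
    return (num_disks - 1 - stripe_no) % num_disks


def build_raid5(data: bytes, num_disks: int) -> list:
    """Stripe data across num_disks simulated disks with one parity block per stripe."""
    if num_disks < 3:
        raise ValueError("RAID 5 needs at least 3 disks")
    stripe_bytes = BLOCK_SIZE * (num_disks - 1)  # data bytes held by one stripe
    padded = data + b"\x00" * (-len(data) % stripe_bytes)  # fill the last stripe
    disks = [[] for _ in range(num_disks)]
    for stripe_no, start in enumerate(range(0, len(padded), stripe_bytes)):
        chunk = padded[start:start + stripe_bytes]
        blocks = [chunk[i:i + BLOCK_SIZE] for i in range(0, stripe_bytes, BLOCK_SIZE)]
        parity = xor_blocks(blocks)
        data_blocks = iter(blocks)
        p = parity_disk_for(stripe_no, num_disks)
        for disk in range(num_disks):
            disks[disk].append(parity if disk == p else next(data_blocks))
    return disks


def rebuild_disk(disks: list, failed: int) -> list:
    """Recreate a failed disk: each missing block is the XOR of its stripe's other blocks."""
    survivors = [d for i, d in enumerate(disks) if i != failed]
    return [xor_blocks(stripe) for stripe in zip(*survivors)]


def read_data(disks: list, length: int) -> bytes:
    """Read the data blocks back in order, skipping parity, and drop the padding."""
    out = bytearray()
    for stripe_no in range(len(disks[0])):
        p = parity_disk_for(stripe_no, len(disks))
        for disk in range(len(disks)):
            if disk != p:
                out += disks[disk][stripe_no]
    return bytes(out[:length])


if __name__ == "__main__":
    original = b"RAID 5 keeps data safe!"
    array = build_raid5(original, num_disks=3)
    lost = array[1]
    array[1] = None  # simulate drive 1 failing
    array[1] = rebuild_disk(array, failed=1)
    assert array[1] == lost
    print(read_data(array, len(original)))  # prints b'RAID 5 keeps data safe!'
```

In this scheme, losing a second disk before the rebuild finishes would make the data unrecoverable, which is the gap that RAID 6's second parity block closes.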

Hardware RAID:
Pros:
★ Uses a hard disk controller / PCI Express card.
★ All hard disks are connected to that controller.
★ At boot, a BIOS-like setup screen lets you configure the drives into your preferred array.
★ You can install an operating system on the hard disk array.
★ Fast; gives a performance boost.
Con:
★ Expensive.

Software RAID:
Pros:
★ Needs an operating system to be available to create the array.
★ Good for storing data redundantly.
★ Windows Server or a similar operating system can be installed to configure RAID 0, 1, 5, etc.
★ Less expensive.
Cons:
★ If the hard disk with the operating system dies, the entire PC fails.
★ Software RAID can slow the PC down, as it uses the computer's own processor and RAM.

Size vs. Volume In RAID:
In RAID 5, hard disks of any size can be used, from 80GB to 1500GB and beyond. However, each drive can only contribute as much space as the smallest drive in the array. Why? Data is striped across the drives in equal portions, according to the space allocated when the array is configured, so the smallest drive sets the usable size for all the others.

Size & Volume Of Array:
Size of an array refers to the total amount of hard disk space allocated to the array, e.g. (4 x 250GB) hard disks = 1000GB/1TB.
Volume of an array refers to the amount of usable space, e.g. RAID 1 (mirror) (2 x 250GB) = 250GB volume, and RAID 5 (4 x 250GB) = 750GB. RAID 0 has no redundancy, so its volume is the same as its size.

That concludes the tutorial. I hope this helps. If you have any queries, feel free to contact me via the contact form at the top, next to the YouTube tab, or simply comment on the post below.

This tutorial is very simple and will help you write protect any flash drive. This helps when your device is stolen or when somebody wants to delete your sensitive material without you knowing about it. The tutorial will concentrate on (1) how to write protect a flash drive and (2) how to remove write protection. The flash drive I will be using as a test device is an 8GB USB 2.0 Pen Cap Apacer AH322 flash drive, and the operating system I will be using is Microsoft Windows 7 Ultimate x86.
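Both halves of this tutorial come down to the same pair of DiskPart commands: select disk N, followed by attributes disk set readonly (to protect) or attributes disk clear readonly (to unprotect). DiskPart can also run commands from a text file with `diskpart /s <file>`. The Python sketch below assumes exactly that: it builds the script text for a given disk number and can optionally run it. The helper names are hypothetical, running it is Windows-only and needs an administrator prompt, and the disk number must come from your own `list disk` output, because selecting the wrong disk changes the attributes of the wrong drive.

```python
import subprocess
import tempfile
from pathlib import Path


def build_diskpart_script(disk_number: int, readonly: bool) -> str:
    """Return DiskPart script text that sets or clears a disk's read-only attribute."""
    if disk_number < 0:
        raise ValueError("disk number must be 0 or greater")
    action = "set" if readonly else "clear"
    commands = [
        f"select disk {disk_number}",
        f"attributes disk {action} readonly",
        "exit",
    ]
    return "\n".join(commands) + "\n"


def run_diskpart(script: str) -> int:
    """Save the script to a temporary file and run `diskpart /s` on it.

    Windows only; must be run from an administrator prompt.
    """
    with tempfile.TemporaryDirectory() as tmp:
        script_path = Path(tmp) / "writeprotect.txt"
        script_path.write_text(script, encoding="ascii")
        result = subprocess.run(["diskpart", "/s", str(script_path)])
    return result.returncode


if __name__ == "__main__":
    # Disk 2 is only the test drive from this tutorial; check yours with `list disk`.
    print(build_diskpart_script(2, readonly=True), end="")
    # run_diskpart(build_diskpart_script(2, readonly=True))  # uncomment to apply
```

Keeping the script generation separate from running it lets you read the exact commands before anything touches a disk.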
Enable Write Protection: Start this process by plugging your USB flash drive into an open USB port on your laptop or desktop computer. Wait for your computer to successfully detect the new device, then follow the steps below:

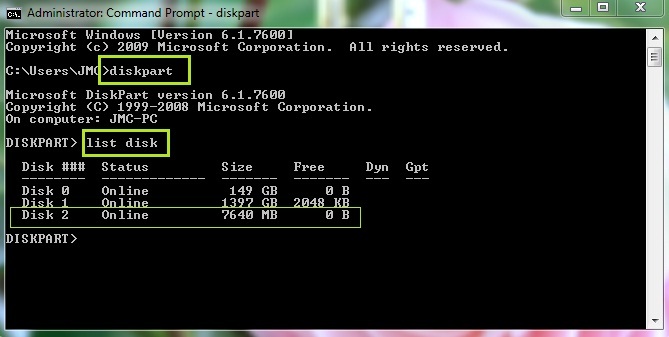

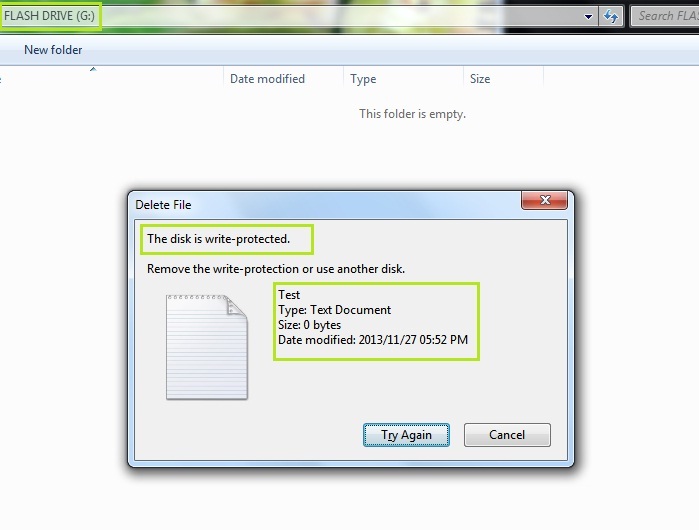

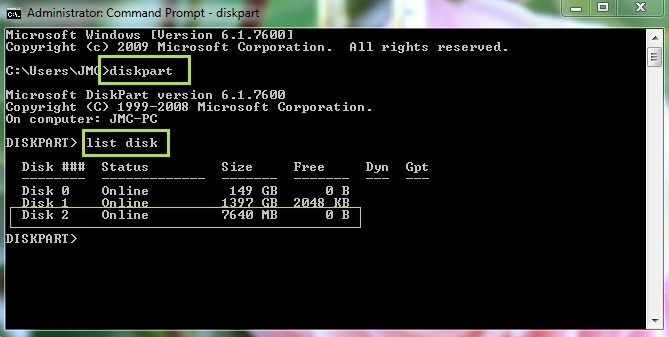

A Command Prompt window will appear.

- Type the following and press Enter: diskpart
- Type the following and press Enter: list disk

This short menu shows a detailed list of the storage devices plugged into the computer, including the flash drive and the main storage drives for the operating system. What you want to identify here is the flash drive. Below I have identified the test flash drive.

According to the list in the above image, the flash drive I am using is identified as Disk 2, with a size of 7640 MB. Knowing that the flash drive is an empty 8GB drive, and being aware of the other two drives, I can safely assume that Disk 2 refers to the flash drive. Your flash drive may have a different number, so use the one from your own list in the next step.

- Type the following and press Enter: select disk 2
  You will receive a confirmation message stating: Disk 2 is now the selected disk
- Type the following and press Enter: attributes disk set readonly
  You will receive a confirmation message stating: Disk attributes set successfully

Below is an example of the above.

To test whether your flash drive has been write protected, head over to Computer, find your flash drive, open it and try deleting any file. You will receive an error like the one below. You have now successfully write protected your flash drive: nobody will be able to delete, format or remove any files until the read-only attribute is cleared again.

- Type the following and press Enter: exit
  This closes DiskPart. You may now exit the Command Prompt window.

Remove Write Protection: If you wish to remove the write protection from your flash drive, follow the steps below.

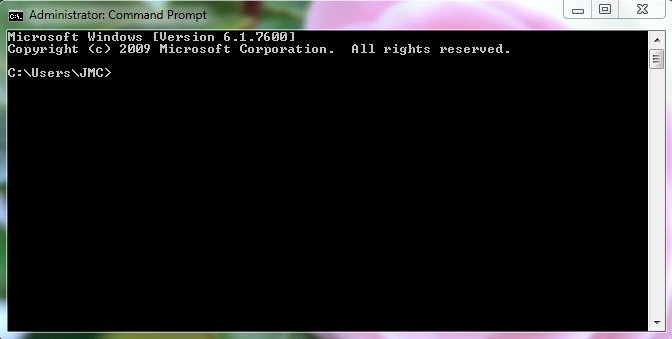

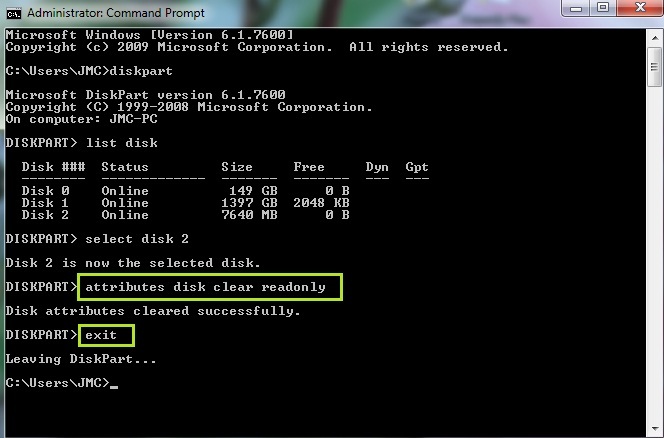

- Type the following and press Enter: diskpart
- Type the following and press Enter: list disk

You will once again see a detailed list of the storage devices plugged into the computer, including the flash drive and the main storage drives for the operating system. Identify the flash drive. Below I have identified the test flash drive again.

- Type the following and press Enter: select disk 2
  You will receive a confirmation message stating: Disk 2 is now the selected disk
- Type the following and press Enter: attributes disk clear readonly
  You will receive a confirmation message stating: Disk attributes cleared successfully

Below is an example of the above.

You can once again head to Computer, find your flash drive, and delete anything you wish.

- Type the following and press Enter: exit
  You may now close the Command Prompt window.

This concludes the tutorial. I hope you find it useful. If you have any issues, feel free to comment on this post and/or send me an email.

What Is Ethical Hacking? Ethical hacking is the process of finding and exploiting vulnerabilities in a system and then fixing them, to protect the system from other hackers. A person performing ethical hacking holds an ethical hacking certification and has good knowledge of computers, networks, programming and various vulnerabilities. His work is to secure his own network and protect it from all sorts of cyber attacks.

What Is A Hacker? An enthusiastic and skillful computer programmer or user who uses his skill set to bypass the limits of, and improve, a program, game or programming language. The term also covers someone messing about with something in a positive sense, that is, using playful cleverness to achieve a goal. There is a community, a shared culture, of expert programmers and networking wizards that traces its history back through decades. The members of this culture originated the term ‘hacker’.
Hackers built the Internet; hackers made the Unix operating system what it is today; hackers run Usenet; hackers make the World Wide Web work. The hacker mindset is not confined to this software-hacker culture. There are people who apply the hacker attitude to other things, like electronics or music. You can find it at the highest levels of any science or art.

There is another group of people who loudly call themselves hackers, but aren't. These are people (mainly adolescent males) who get a kick out of breaking into computers. Real hackers call these people ‘crackers’ and want nothing to do with them. Unfortunately, many journalists and writers have been fooled into using the word ‘hacker’ to describe crackers; this irritates real hackers to no end.

What Is A Cracker? A cracker is someone who breaks into someone else's computer system, often on a network; bypasses passwords or licenses in computer programs; or in other ways intentionally breaches computer security. A cracker may do this for profit, maliciously, for some altruistic purpose or cause, or simply because the challenge is there. Some breaking-and-entering has been done ostensibly to point out weaknesses in a site's security system. The basic difference is this: hackers build things, crackers break them.

Types of Hackers based on their knowledge:

Coders: Coders are real hackers. They are programmers with immense knowledge of many programming languages, networking and how programs work. They are skilled programmers who can find vulnerabilities on their own and create exploits based on those vulnerabilities. They can code their own tools and exploits, and can modify existing tools to suit their needs.

Admins: These are computer professionals who are not strong enough in programming but hold enough knowledge about hacking and networking. They have hacking certifications and can hack systems or networks with the help of tools and exploits created by coders.
The majority of security consultants fall into this group. They are Certified Ethical Hackers who are trained to secure networks.

Script Kiddies: This is the most dangerous type of hacker. These hackers do not actually know what they are doing. They just use tools and partial knowledge gained from the internet to attack systems, doing it for fun and to become famous. They rely on tools and exploits coded by other hackers and have minimal skills.

Types of Hackers based on their motive for hacking:

★ White Hat Hackers: White hat hackers are ethical hackers with certifications such as CEH (Certified Ethical Hacker). They break into systems only for legal purposes. Their main motive is to find loopholes in networks and fix them. These hackers work with well-known companies to secure their systems and protect them against other hackers.

★ Black Hat Hackers: A black hat hacker may or may not have any hacking certification, but holds good knowledge about hacking. They use their skills for destructive purposes, breaking into systems and networks either for fun or to make money by illegal means. They gain unauthorized access and destroy or steal confidential data, or generally cause problems for their target.

★ Gray Hat Hackers: A gray hat hacker is a combination of a black hat and a white hat hacker. A gray hat hacker may surf the internet and hack into a computer system for the sole purpose of notifying the administrator that their system has been hacked. They may then offer to repair the system for a small fee.

A little lesson in UNIX. Many people refer to an open-source Unix-like operating system as a Linux operating system. This is incorrect. Referring to Linux as an operating system, or using it as the catch-all name for all things UNIX, is a common mistake. What it is actually called is GNU/Linux, or GNU plus Linux.
Linux is not an operating system unto itself, but rather another free component of a fully functioning GNU system made useful by the GNU corelibs, shell utilities and vital system components comprising a full OS as defined by POSIX. Many computer users run a modified version of the GNU system every day without realizing it. Through a peculiar turn of events, the version of GNU that is widely used today is often called "Linux", and many of its users are not aware that it is basically the GNU system, developed by the GNU Project.

Linux is the kernel: the program in the system that allocates the machine's resources to the other programs that you run. The kernel is an essential part of an operating system, but useless by itself; it can only function in the context of a complete operating system. Linux is normally used in combination with the GNU operating system: the whole system is basically GNU with Linux added, or GNU/Linux. All the so-called "Linux" distributions are really distributions of GNU/Linux.

GNU: Richard Matthew Stallman (born March 16, 1953), often shortened to RMS, is an American software freedom activist and computer programmer. In September 1983, he launched the GNU Project to create a free Unix-like operating system, and he has been the project's lead architect and organizer. With the launch of the GNU Project, he initiated the free software movement; in October 1985 he founded the Free Software Foundation. Stallman pioneered the concept of copyleft, and he is the main author of several copyleft licenses, including the GNU General Public License, the most widely used free software license. Since the mid-1990s, Stallman has spent most of his time advocating for free software, as well as campaigning against software patents, digital rights management, and what he sees as excessive extension of copyright laws.
Stallman has also developed a number of pieces of widely used software, including the original Emacs, the GNU Compiler Collection, the GNU Debugger, and various tools in the GNU coreutils. He co-founded the League for Programming Freedom in 1989.

GNU is a Unix-like computer operating system developed by the GNU Project, ultimately aiming to be a "complete Unix-compatible software system" composed wholly of free software. Development of GNU was initiated by Richard Stallman in 1983 and was the original focus of the Free Software Foundation (FSF), but as of September 2010 no stable release of GNU yet exists. The FSF maintains that Linux, when used with GNU tools and utilities, should be considered a variant of GNU, and promotes the term GNU/Linux for such systems, which has led to the GNU/Linux naming controversy.

Linux: Linus Benedict Torvalds (born December 28, 1969) is a Finnish-American software engineer and hacker who was the principal force behind the development of the Linux kernel. He later became the chief architect of the Linux kernel, and now acts as the project's coordinator. He also created the revision control system Git. He was honored, along with Shinya Yamanaka, with the 2012 Millennium Technology Prize by the Technology Academy Finland "in recognition of his creation of a new open source operating system for computers leading to the widely used Linux kernel".

The name Linux comes from the first name of the kernel's creator, Linus Torvalds. The defining component of Linux is the Linux kernel, an operating system kernel first released on October 5, 1991 by Linus Torvalds. The Linux kernel is used by the Linux family of Unix-like operating systems, and it is one of the most prominent examples of free and open-source software. "Linux operating systems" is also an incorrect term; the correct term is GNU/Linux distributions.

The Founding Fathers of All Things Unix are Richard Stallman, Linus Torvalds, Andrew S. Tanenbaum, Bruce Perens, Michael Tiemann and Eric S. Raymond. Each played a vital role in the development of what we know today as the world of GNU/Linux. |

Archives

October 2022


|
